For 18 years, I have been working at DPS on projects for our customers involving host and mainframe systems – particularly in the area of securities settlement. Based on this experience, it is clear to me that T+1 is no ordinary regulatory project. It is a stress test for mature core systems.

The fourth part of our T+1 series therefore focuses on the technical basis of the changeover. After scoping, dependencies and implementation issues, the focus now shifts to legacy IT. The high-level roadmap of the EU T+1 Industry Committee defines a clear target: extensive automation, high STP rates, compressed post-trade time windows and clearly timed settlement cut-offs. This is precisely where it will be decided whether existing host systems can keep up.

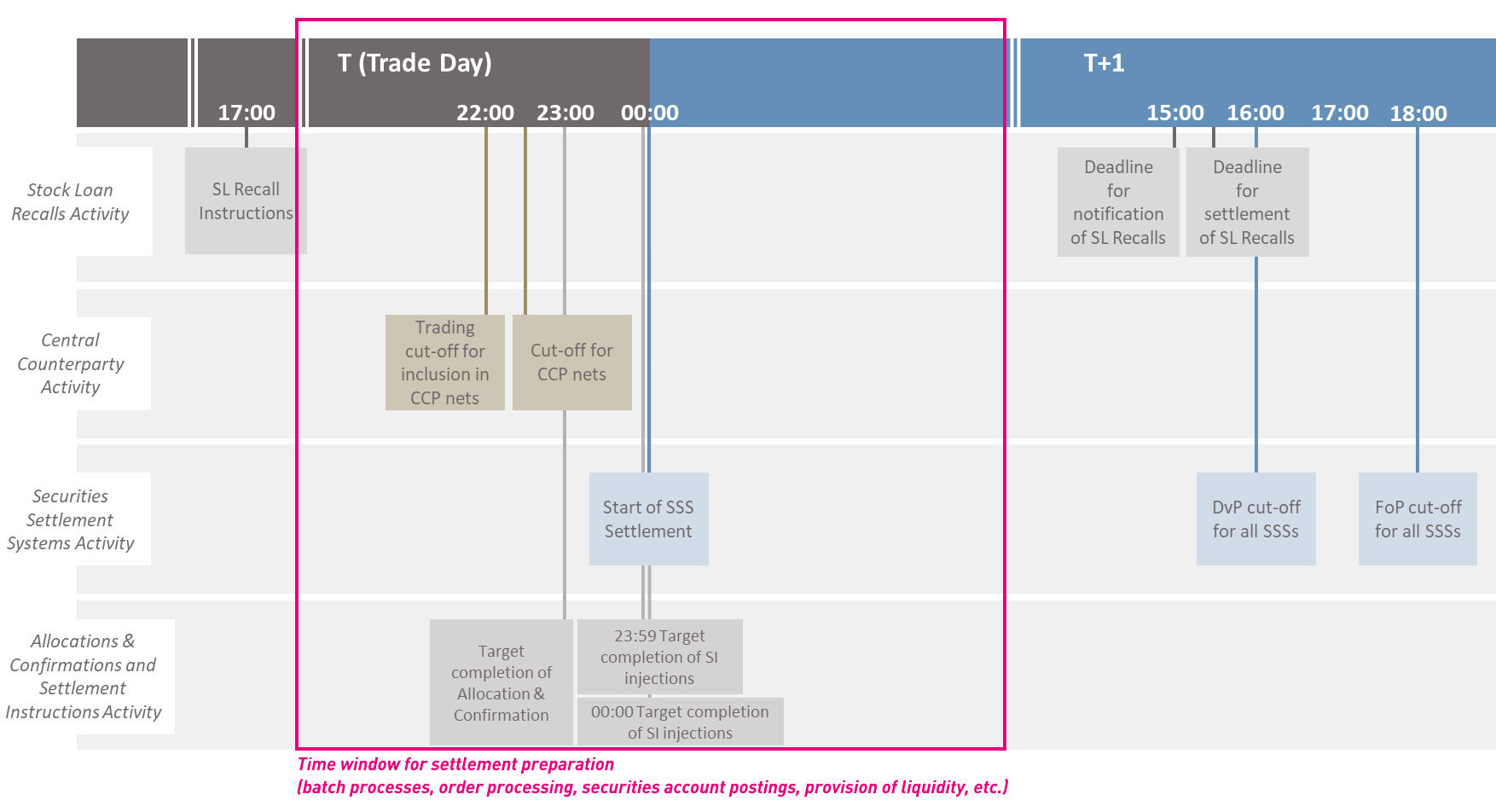

T+1 does more than shorten settlement cycles. It compresses the entire post-trade chain, from end-of-day netting through matching and confirmation to final booking on the securities account side. In practice, this means that trading hours are extended while processing windows shrink.

End-of-day processing as a critical path

Processing and batch runtimes in end-of-day processing are crucial. After the close of trading, orders must be settled, confirmed, forwarded internally and booked on the securities account side. Corporate actions processing and master data runs take place in parallel.

This mass data processing takes place predominantly on the mainframe, often in COBOL or assembler programs backed by IMS or DB2 databases. With T+1, the time window between the end of trading and the next system start-up becomes narrower. At the same time, volume and complexity are increasing.

The problem here is less the average utilisation than the concentration of peak loads in the batch windows. Historically grown job chains with sequential dependencies become the critical path under T+1. If settlement instructions have to reach clearing and the CSD in time, internal booking must not become a bottleneck.
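To make the idea of a critical path tangible, here is a minimal sketch in Python. The job names, runtimes and dependencies are purely hypothetical and do not come from any real job net; in practice this data would be taken from the scheduler's job definitions and runtime statistics. The sketch walks the jobs in dependency order and determines the longest chain, which is the lower bound for the batch window no matter how much capacity is added.

```python
from graphlib import TopologicalSorter

# Hypothetical job net: job -> (runtime in minutes, predecessor jobs).
JOBS = {
    "netting":      (30, []),
    "matching":     (45, ["netting"]),
    "confirmation": (20, ["matching"]),
    "corp_actions": (40, []),
    "master_data":  (25, []),
    "booking":      (60, ["confirmation", "corp_actions", "master_data"]),
    "csd_instruct": (15, ["booking"]),
}

def critical_path(jobs):
    """Return (total_minutes, path) of the longest dependency chain."""
    graph = {name: set(preds) for name, (_, preds) in jobs.items()}
    finish = {}  # earliest possible finish time per job
    via = {}     # predecessor that determines each job's start
    for name in TopologicalSorter(graph).static_order():
        runtime, preds = jobs[name]
        start = max((finish[p] for p in preds), default=0)
        via[name] = max(preds, key=lambda p: finish[p], default=None)
        finish[name] = start + runtime
    total = max(finish.values())
    # Walk back from the job that finishes last.
    node = max(finish, key=finish.get)
    path = []
    while node is not None:
        path.append(node)
        node = via[node]
    return total, path[::-1]

total, path = critical_path(JOBS)
print(total, " -> ".join(path))
```

With these assumed figures, the chain from netting to the CSD instruction takes 170 minutes, while corporate actions and master data runs have slack. Runtime tuning only pays off for jobs on that chain.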

Realistic optimisation instead of architectural revolution

The discussion often brings up a complete real-time transformation – event-driven architectures, near-real-time processing or the move away from mainframes. With a view to 2027, this is not realistic for many institutions.

Instead, the focus is on robust optimisation of existing systems.

- A key lever is the reduction of job runtimes. This includes code optimisations, more efficient database access, analysis of I/O load and the elimination of redundant processing steps. In IMS environments in particular, significant effects can be achieved by adjusting access paths or commit strategies.

- A second approach is additional computing capacity – for example, by purchasing MIPS to cushion peak loads. This is possible, but costly and not a sustainable solution.

- The decisive lever often lies in batch orchestration. Not every processing task has to run strictly sequentially. Load peaks can be smoothed out through parallelisation, prioritisation and targeted resource control. Modern workload management concepts make it possible to dynamically move non-critical jobs and stabilise the settlement-relevant path.
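The orchestration lever can be illustrated with a small simulation. This is a sketch under stated assumptions, not a model of any real workload manager: the jobs, runtimes and the `settlement_relevant` flag are hypothetical, `slots` stands in for the number of jobs that may run at the same time, and the job net is assumed to be free of cycles. Jobs that are ready and settlement-relevant are always started first.

```python
import heapq

# Hypothetical jobs: name -> (runtime minutes, predecessors, settlement_relevant)
JOBS = {
    "netting":      (30, [], True),
    "matching":     (45, ["netting"], True),
    "booking":      (60, ["matching"], True),
    "csd_instruct": (15, ["booking"], True),
    "reporting":    (50, [], False),
    "archiving":    (40, [], False),
    "statistics":   (35, [], False),
}

def simulate(jobs, slots):
    """Simulate priority list scheduling with `slots` parallel job slots.

    Returns a dict with the finish time (in minutes) of every job.
    """
    finish = {}    # job -> finish time, filled when the job completes
    running = []   # min-heap of (finish_time, job)
    started = set()
    now = 0
    while len(finish) < len(jobs):
        # Jobs whose predecessors have all completed.
        ready = [n for n, (_, preds, _) in jobs.items()
                 if n not in started and all(p in finish for p in preds)]
        # Settlement-relevant jobs first, then by name for determinism.
        ready.sort(key=lambda n: (not jobs[n][2], n))
        while ready and len(running) < slots:
            name = ready.pop(0)
            started.add(name)
            heapq.heappush(running, (now + jobs[name][0], name))
        # Advance the clock to the next job completion.
        now, done = heapq.heappop(running)
        finish[done] = now
    return finish

for slots in (1, 2):
    result = simulate(JOBS, slots)
    print(slots, result["csd_instruct"], max(result.values()))
```

With these assumed figures, the settlement-relevant chain finishes after 150 minutes in both cases, because it is prioritised. The overall batch run shrinks from 275 minutes with one slot to 150 minutes with two, because the non-critical jobs run alongside the chain instead of after it.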

Keeping an eye on hybrid architectures

Added to this is the increasing hybridisation of the application landscape. Mainframe systems interact with middleware, decentralised applications or cloud components.

Under compressed settlement windows, latencies between these systems become more noticeable.

T+1 therefore requires not only faster batch runs, but also a clear end-to-end understanding of the entire post-trade process chain, from STP rates in trade matching to consistent position keeping in the core system.
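A simple end-to-end time budget shows why hand-off latencies matter. The following sketch uses entirely hypothetical stages, durations, latencies, start time and cut-off; none of these values are taken from the roadmap or from a real system. It adds up processing time and hand-off latency per stage and reports the slack that remains before the cut-off.

```python
from datetime import datetime, timedelta

# Hypothetical stages: (name, processing minutes, hand-off latency to next stage)
STAGES = [
    ("trade matching (middleware)",  20, 5),
    ("end-of-day netting (host)",    30, 2),
    ("booking (host)",               60, 10),
    ("instruction to CSD (gateway)", 15, 0),
]

def slack_to_cutoff(start, cutoff, stages):
    """Return the time left before `cutoff` once all stages and hand-offs are done."""
    elapsed = sum(processing + handoff for _, processing, handoff in stages)
    return cutoff - (start + timedelta(minutes=elapsed))

# Assumed start of processing and assumed cut-off, for illustration only.
start = datetime(2027, 1, 4, 22, 0)
cutoff = datetime(2027, 1, 5, 0, 30)

slack = slack_to_cutoff(start, cutoff, STAGES)
handoffs = sum(handoff for _, _, handoff in STAGES)
print(f"slack: {slack}, of which hand-offs consume {handoffs} minutes")
```

In this example, the hand-offs between systems add up to 17 minutes and reduce the slack to 8 minutes. A budget of this kind makes visible which interface consumes time that the batch run itself cannot win back.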

Expertise is also a limiting factor. Performance optimisation in z/OS environments or in assembler-based components requires specialised platform knowledge. This expertise cannot be scaled at will. Those who recognise the need for optimisation too late will find themselves under considerable time pressure.

My clear conclusion: T+1 is not an architectural restart. It is a stress test for existing core systems. The decisive factor is whether institutions can control their end-of-day and batch processing in such a way that settlement cut-offs are met, clearing processes are supported and positions are kept consistent.

The mature IT architecture of securities institutions is therefore not a thing of the past, but can continue to function as the operational backbone of securities settlement. And while T+0 may require a genuine real-time transformation, T+1 is decided by a much more pragmatic question: how robust is your batch orchestration?